Direct-to-Chip vs. Immersion Cooling: Navigating the Liquid Transition

Apr 6, 2026

Think about how hot your laptop or smartphone gets when you are running a heavy application or playing a high-resolution game. Now, multiply that intense heat by millions and pack it all into a single room.

That is the exact challenge modern data centers are facing today. Thanks to the explosive growth of artificial intelligence and high-performance computing, servers are working harder and running hotter than ever before. For years, blowing cold air over the components was enough to keep things running smoothly. But just like a small desk fan cannot cool down an overworked laptop, traditional air cooling struggles to keep up with the highest-density workloads. The industry is converging on liquid cooling as the answer. It is no longer just a futuristic luxury; it is rapidly becoming essential for high-density environments.

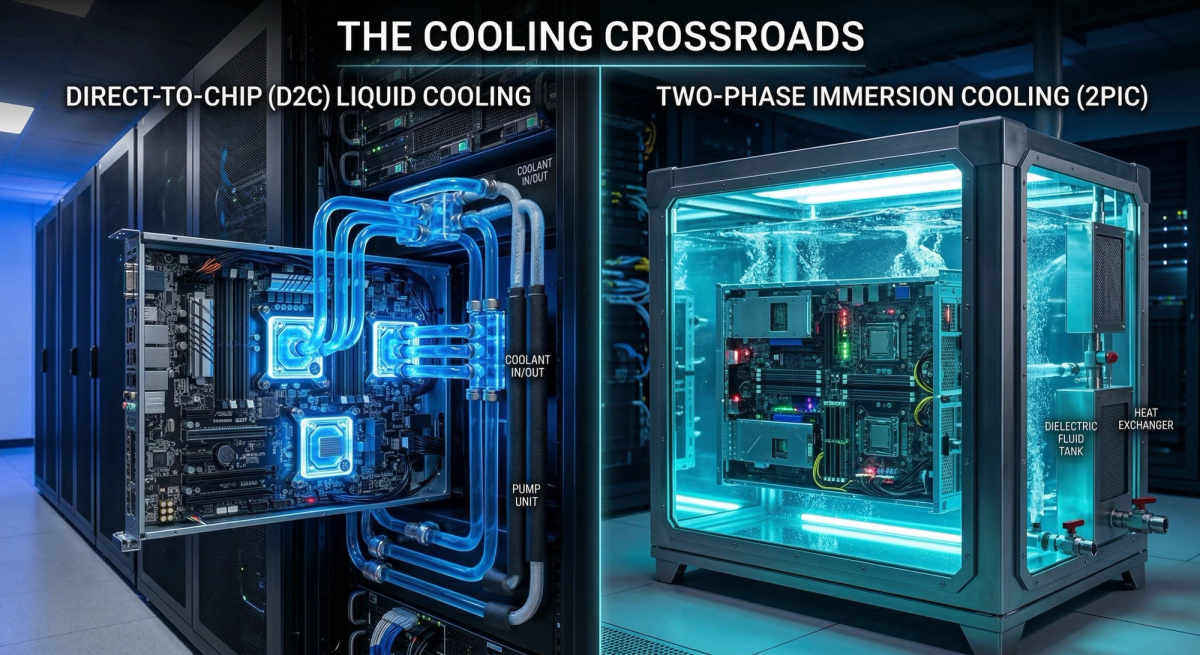

Swapping air for liquid is not a simple upgrade, however, and the facility operators running these data centers are now standing at a major crossroads. They must choose between two fundamentally different approaches to get the job done: Direct-to-Chip (D2C) cooling or Immersion cooling.

Both methods rely on the same basic science, since liquid transfers heat far more efficiently than air, but that is where the similarities end. The upfront costs, the way the equipment is installed, and the day-to-day maintenance required differ significantly. For operators tasked with scaling their infrastructure without introducing paralyzing bottlenecks for their teams, understanding these differences is critical.

Here is an objective, data-driven look at how Direct-to-Chip and Immersion cooling stack up, and why D2C currently represents the most practical and widely deployable option for many enterprise environments.

The Architectures: Targeted Delivery vs. Total Submersion

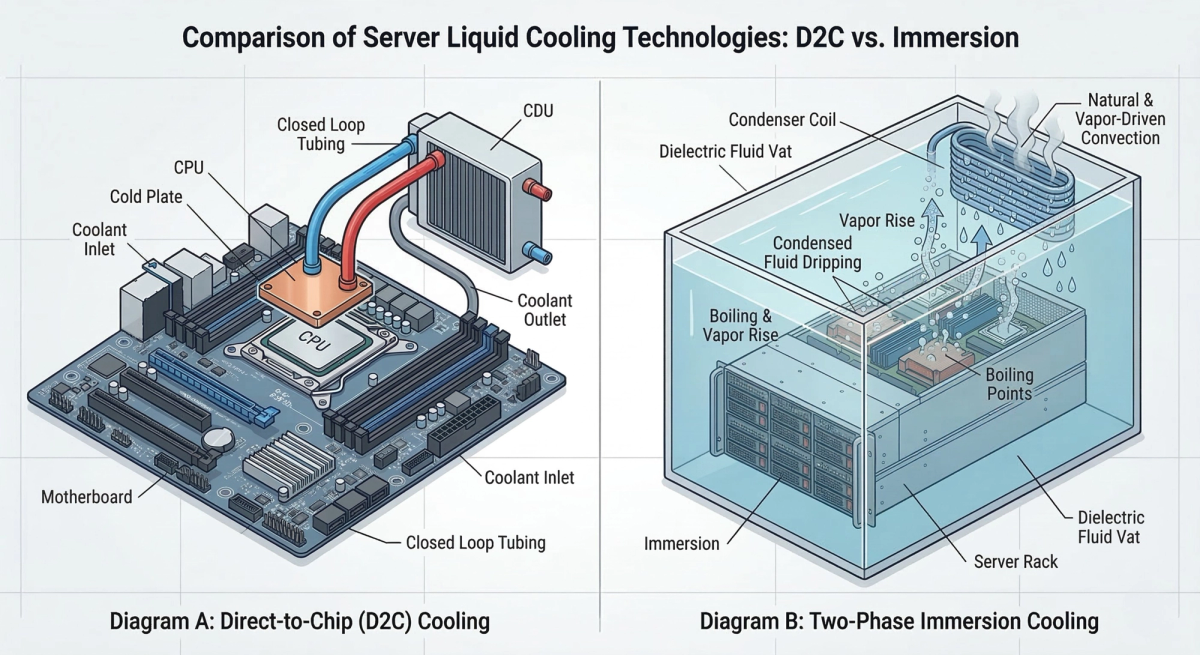

To understand the operational divide, it helps to look at how these systems interact with the hardware.

Direct-to-Chip (D2C) Cooling functions like a targeted IV drip, delivering a precise flow of cooling fluid directly to the vital organs of the server, the CPUs and GPUs, via engineered cold plates. This method effectively captures 70% to 75% of the server's thermal load right at the source. The remaining ambient heat is managed by standard, low-speed fans or rear-door heat exchangers (RDHx). D2C comfortably supports high-density racks drawing between 30 kW and 100 kW.
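To make the 70% to 75% capture figure concrete, here is a minimal sketch that splits a rack's heat load between the liquid loop and the air side, then estimates the coolant flow needed from the standard relation Q = m_dot × cp × ΔT. The function names are hypothetical, and the default specific heat and density are rough assumptions for a water and glycol mix, not values from any vendor datasheet.

```python
def split_heat_load(rack_kw: float, capture_fraction: float) -> tuple[float, float]:
    """Return (liquid_kw, air_kw): heat taken by the cold plates vs. left for air."""
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    liquid_kw = rack_kw * capture_fraction
    return liquid_kw, rack_kw - liquid_kw


def coolant_flow_lpm(
    liquid_kw: float,
    delta_t_c: float,
    cp_kj_per_kg_k: float = 3.8,     # ASSUMPTION: rough value for a water/glycol mix
    density_kg_per_l: float = 1.03,  # ASSUMPTION: rough value for a water/glycol mix
) -> float:
    """Estimate coolant flow in litres per minute from Q = m_dot * cp * dT."""
    if delta_t_c <= 0:
        raise ValueError("delta_t_c must be positive")
    mass_flow_kg_s = liquid_kw / (cp_kj_per_kg_k * delta_t_c)  # kW == kJ/s
    return mass_flow_kg_s / density_kg_per_l * 60.0


# Hypothetical example: a 100 kW rack with 70% capture and a 10 °C coolant rise.
liquid_kw, air_kw = split_heat_load(rack_kw=100.0, capture_fraction=0.70)
flow = coolant_flow_lpm(liquid_kw, delta_t_c=10.0)
print(f"liquid: {liquid_kw:.1f} kW, air: {air_kw:.1f} kW, flow: {flow:.0f} L/min")
```

Under these assumptions the rack needs on the order of 100 L/min of coolant, while roughly 30 kW still has to be removed by fans or a rear-door heat exchanger.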

Immersion Cooling, by contrast, is akin to placing the entire patient in a sensory deprivation tank. The entire server chassis is submerged in a vat of non-conductive dielectric fluid. This approach generally falls into two categories. The first is single-phase immersion, where the cooling fluid remains a liquid as it absorbs heat and is pumped away to be cooled. The second is two-phase immersion, where the heat from the components actually causes the fluid to boil and turn into a vapor to carry the heat away. In both setups, the fluid absorbs nearly the entire thermal load, completely eliminating the need for server fans. While immersion can support extreme densities of 50 kW to 250 kW per rack, it requires a complete reimagining of the data center floor.
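As a quick way to compare the two density ranges quoted above, the sketch below checks which approach covers a given rack power. The ranges are the figures from this article, treated here as rough guidance rather than hard engineering limits, and the names `SUPPORTED_KW` and `candidate_approaches` are hypothetical.

```python
# Rack density ranges (kW per rack) as quoted in this article.
SUPPORTED_KW = {
    "direct-to-chip": (30, 100),
    "immersion": (50, 250),
}


def candidate_approaches(rack_kw: float) -> list[str]:
    """Return the approaches whose quoted range includes the given rack power."""
    return [
        name
        for name, (low_kw, high_kw) in SUPPORTED_KW.items()
        if low_kw <= rack_kw <= high_kw
    ]


for rack_kw in (40, 80, 200):
    print(rack_kw, "kW ->", candidate_approaches(rack_kw))
```

The overlap between 50 kW and 100 kW is where the choice is decided by factors other than raw thermal capacity.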

Hardware Compatibility and the Maintenance Penalty

The most profound divergence between the two technologies lies in hardware compatibility and the realities of day-to-day facility maintenance.

D2C architectures are designed to preserve standard data center topology. Servers are housed in traditional Electronic Industries Alliance (EIA) 19-inch vertical racks. If a component fails, a technician can walk down the aisle, perform a hot-swap, and replace the part using established operational protocols. Crucially, major original equipment manufacturers (OEMs) design their advanced silicon specifically for D2C environments, ensuring that expensive hardware warranties remain intact.

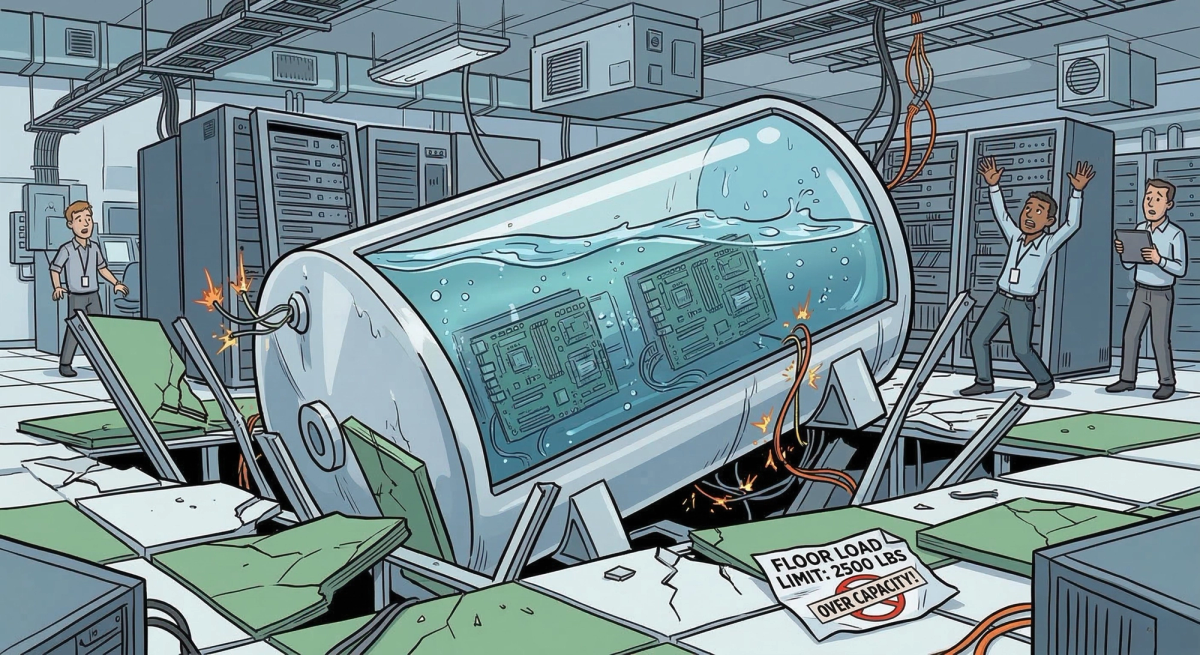

Immersion cooling fundamentally disrupts this model. Standard vertical racks are replaced by heavy, horizontal vats. While immersion can be highly effective for brand-new, purpose-built "greenfield" facilities, retrofitting a traditional "brownfield" data center for immersion is somewhat like trying to turn a standard commercial office building into a public aquarium; the floor-loading capacities simply were not designed to support the massive weight of fluid-filled tanks.
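The floor-loading concern can be checked with simple arithmetic. The sketch below is a rough illustration only: every number in the example (tank volume, fluid density, weights, footprint, and floor rating) is a hypothetical placeholder, and a real assessment belongs to a structural engineer.

```python
def tank_floor_load_kg_m2(
    fluid_litres: float,
    fluid_density_kg_per_l: float,
    tank_empty_kg: float,
    it_hardware_kg: float,
    footprint_m2: float,
) -> float:
    """Distributed floor load of a filled immersion tank, in kg per square metre."""
    if footprint_m2 <= 0:
        raise ValueError("footprint_m2 must be positive")
    total_kg = fluid_litres * fluid_density_kg_per_l + tank_empty_kg + it_hardware_kg
    return total_kg / footprint_m2


def exceeds_floor_rating(load_kg_m2: float, floor_rating_kg_m2: float) -> bool:
    """True if the tank load is above what the floor is rated to carry."""
    return load_kg_m2 > floor_rating_kg_m2


# HYPOTHETICAL inputs, chosen only to illustrate the calculation.
load = tank_floor_load_kg_m2(
    fluid_litres=1000.0,
    fluid_density_kg_per_l=0.85,  # ASSUMPTION: typical of a hydrocarbon-based fluid
    tank_empty_kg=400.0,
    it_hardware_kg=750.0,
    footprint_m2=2.0,
)
print(f"{load:.0f} kg/m2, over rating: {exceeds_floor_rating(load, floor_rating_kg_m2=750.0)}")
```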

Furthermore, routine maintenance in an immersion environment is highly complex. Replacing a failed graphics accelerator often requires specialized lifting or handling equipment to hoist a dripping server out of a dielectric bath. This drastically increases the mean time to repair (MTTR) and requires highly specialized training. Additionally, while major OEMs are beginning to offer specific "immersion-ready" servers, submerging standard IT equipment often requires physically modifying it (such as removing fans and changing thermal pastes). Such modifications typically void standard OEM warranties and severely restrict your hardware choices.
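To see why MTTR matters, consider the standard steady-state availability relation, availability = MTBF / (MTBF + MTTR). The sketch below applies it to two repair times. The MTBF and MTTR values are hypothetical and are not measurements of either cooling approach; they only show how a longer repair translates into more downtime.

```python
HOURS_PER_YEAR = 8760


def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: MTBF / (MTBF + MTTR)."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("mtbf_hours must be positive and mttr_hours non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


def annual_downtime_hours(mtbf_hours: float, mttr_hours: float) -> float:
    """Expected hours of downtime per year for one server."""
    return (1.0 - availability(mtbf_hours, mttr_hours)) * HOURS_PER_YEAR


# HYPOTHETICAL values: same failure rate, different repair times.
for label, mttr in (("short repair", 1.0), ("long repair", 4.0)):
    hours = annual_downtime_hours(mtbf_hours=2000.0, mttr_hours=mttr)
    print(f"{label}: {hours:.1f} h/year of downtime")
```

With the failure rate held constant, downtime grows almost in proportion to repair time, which is why a slower service procedure is an operational cost and not just an inconvenience.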

Efficiency Gains: Diminishing Returns at a High Cost

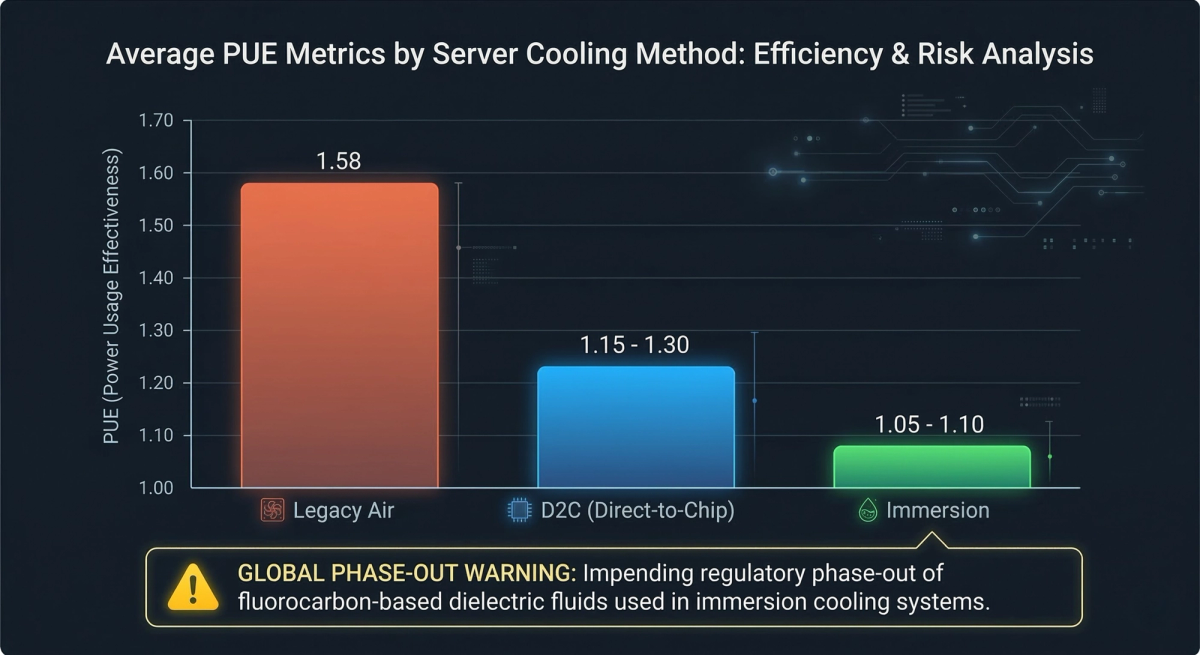

Both systems offer massive improvements in Power Usage Effectiveness (PUE) over legacy air cooling, but the difference between the two is comparatively small.

PUE Benchmarks: D2C configurations routinely achieve highly efficient PUE ratings between 1.15 and 1.30. Single-phase immersion cooling can push that number slightly lower, often between 1.05 and 1.10, by eliminating server fan power entirely.
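PUE is the ratio of total facility power to IT power, so a PUE figure converts directly into overhead. The sketch below, with hypothetical function names and a hypothetical 1,000 kW IT load, shows what the quoted PUE values mean in kilowatts.

```python
def facility_power_kw(it_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE (PUE = total / IT)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_kw * pue


def overhead_kw(it_kw: float, pue: float) -> float:
    """Power spent on everything other than IT (cooling, power conversion, etc.)."""
    return facility_power_kw(it_kw, pue) - it_kw


# HYPOTHETICAL 1,000 kW IT load, using PUE values from the ranges quoted above.
for label, pue in (("D2C", 1.15), ("D2C", 1.30), ("immersion", 1.05), ("immersion", 1.10)):
    print(f"{label} at PUE {pue:.2f}: {overhead_kw(1000.0, pue):.0f} kW overhead")
```

How large the gap looks depends heavily on which ends of the two ranges are compared, so it is worth running the numbers for the specific PUE values a given site can realistically achieve.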

The Fluid Reality: The slight efficiency gain of immersion comes with a steep fluid penalty. Immersion systems rely heavily on expensive dielectric fluids. While single-phase systems (which represent the majority of immersion deployments) use mineral oils or synthetic hydrocarbons, high-performance two-phase systems often rely on fluorocarbons. These fluorocarbon-based fluids are facing increasing regulatory scrutiny and potential restrictions in several regions due to environmental toxicity concerns. D2C, conversely, utilizes readily available, environmentally manageable water and glycol mixtures.

Cost-Benefit Analysis: CapEx and Scalability

When evaluating total cost of ownership (TCO), operators must balance initial capital expenditure (CapEx) against long-term scalability.

The Direct-to-Chip Advantage: D2C offers a modular, phased approach to high-density cooling. Operators can integrate coolant distribution units (CDUs) and secondary piping loops into existing raised-floor facilities without overhauling the building's structural engineering. This allows facilities to scale their CapEx predictably alongside compute demand, effectively extending the life of legacy data centers.

The Immersion Barrier: Immersion cooling requires an immense upfront CapEx investment for retrofits. Beyond the structural reinforcements needed to bear the weight of the tanks, the dielectric fluid itself is a massive line item, often costing hundreds of dollars per gallon. A single leak or contamination event can result in significant financial loss.
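The fluid line item is easy to estimate once a tank volume and a price are known. The sketch below is illustrative only: the tank volume, price per gallon, and loss fraction are hypothetical placeholders, and the function names are invented for this example.

```python
LITRES_PER_US_GALLON = 3.785411784


def fluid_fill_cost_usd(tank_litres: float, price_per_gallon_usd: float) -> float:
    """Cost to fill one tank with dielectric fluid."""
    return tank_litres / LITRES_PER_US_GALLON * price_per_gallon_usd


def fluid_loss_cost_usd(
    tank_litres: float, price_per_gallon_usd: float, lost_fraction: float
) -> float:
    """Replacement cost if a fraction of the fluid is lost or contaminated."""
    if not 0.0 <= lost_fraction <= 1.0:
        raise ValueError("lost_fraction must be between 0 and 1")
    return fluid_fill_cost_usd(tank_litres, price_per_gallon_usd) * lost_fraction


# HYPOTHETICAL inputs: a 1,000-litre tank, $200 per gallon, 10% of the fluid lost.
fill = fluid_fill_cost_usd(tank_litres=1000.0, price_per_gallon_usd=200.0)
loss = fluid_loss_cost_usd(tank_litres=1000.0, price_per_gallon_usd=200.0, lost_fraction=0.10)
print(f"fill: ${fill:,.0f}, 10% loss: ${loss:,.0f}")
```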

Ultimately, for most enterprise operators, the marginal 3–8% PUE improvement offered by immersion does not offset the operational and structural trade-offs required to achieve it.

The Reliability Engine Mandate: Why D2C Wins

When weighing efficiency gains against hardware compatibility and deployment costs, the consensus among enterprise infrastructure architects remains clear. Direct-to-chip cooling is the most scalable, pragmatic solution for managing advanced AI workloads. But deploying the hardware is only half the battle, because realizing its absolute peak performance requires chemical perfection.

Reliability Engine ensures your cooling fluid is maintained at a flawless, dynamically optimized equilibrium. This eliminates microscopic thermal bottlenecks to consistently drive higher tokens per watt. Paired with this level of intelligent fluid management, D2C delivers the extreme heat transfer capacity demanded by next-generation silicon, all without sacrificing standard rack topologies, paralyzing your maintenance workflows, or restricting your hardware choices.

At Reliability Engine, we understand that deploying D2C is only the beginning. The true operational mandate is maintaining continuous fluid health. We empower facility teams to safely maximize their compute density by managing the precise chemistry of their cooling loops.
